NEVERIX

Portfolio


Programming

I have two GitHub accounts. Most of the important projects are on the new one, and the ones on the old profile are listed on its front page.

Hackathons

So far, I have participated in two offline hackathons and multiple online ones.

MIT Reality Hack

Together with my team, I made an XR project named draft360 in two days. We won the Best of AR and Best in Tools awards. I worked on the WebXR frontend and backend.

| Project video |

MLHack 2020

I participated primarily online. We won first place in sales forecasting using a custom SVM-based model.

- MLHack 2020

- Task explanation

- Task file

- Solution Colab

- Solution file

- Solution explanation

- Article about the results

COVID-19 Global Hackathon

I started gathering information about my family a year ago and found it hard to organise and visualise. I decided to participate in the COVID-19 Global Hackathon to create a tool that makes it simple to build family trees, and to learn PySimpleGUI along the way.

| Project video |

Pulkovo Hack

Pulkovo Hack was an online hackathon hosted by Practicing Futures. I participated in it with a program for scheduling and won first place.

| Certificate |

MultiTechBattle

MultiTechBattle was a competition at the intersection of biotechnology and informatics, hosted by Practicing Futures together with Biowalks. I was tasked with analyzing algae images and creating plots of their activity. I also created the controller system responsible for keeping the conditions suitable for growth. I reached the finals and got certificates for participation.

| Certificate |

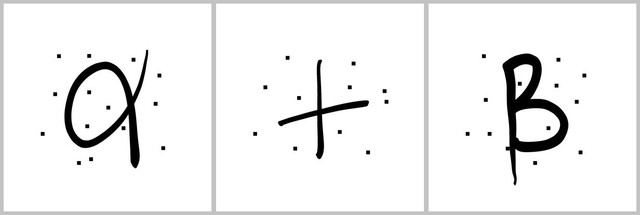

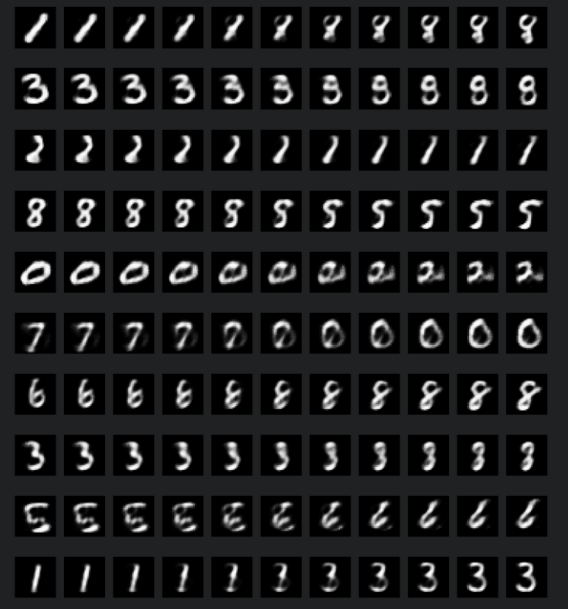

CVML Hack

CVML Hack was a machine learning hackathon that focused on computer vision. The task was digit and mathematical sign recognition. Our team tied for first place.

| Example from the task |

Bilet Hack

Simultaneously with CVML Hack, I participated in Bilet Hack without a team and reached the finals with a Streamlit data app.

| Certificate |

Agro Hack

I participated in Russia’s first agricultural hackathon, Agro Code. The task was apple leaf disease detection. I also created a Streamlit app, which is available online. In this hackathon, I experimented with thresholding and model explanation. I also learned how to deploy models to low-end hardware.

ADT HACK WEEK

After reaching the finals of a national programming competition called NTI, I received an invitation to a series of hackathons from the Academy of Digital Technologies (ADT).

I worked in a team to create an augmented reality checkers game. I worked on the computer vision component and used OpenCV to recognize the chess board and the piece (we only had time for one). We won first place in the hackathon.

| Certificate |

Projects

This is a quick summary of the projects on my GitHub. To see a full list, please look at my profile.

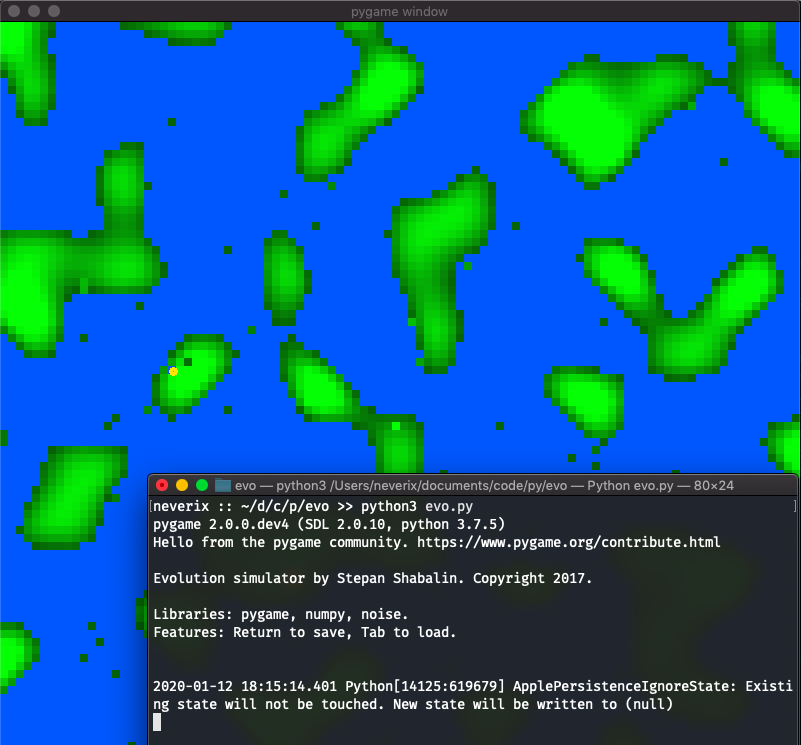

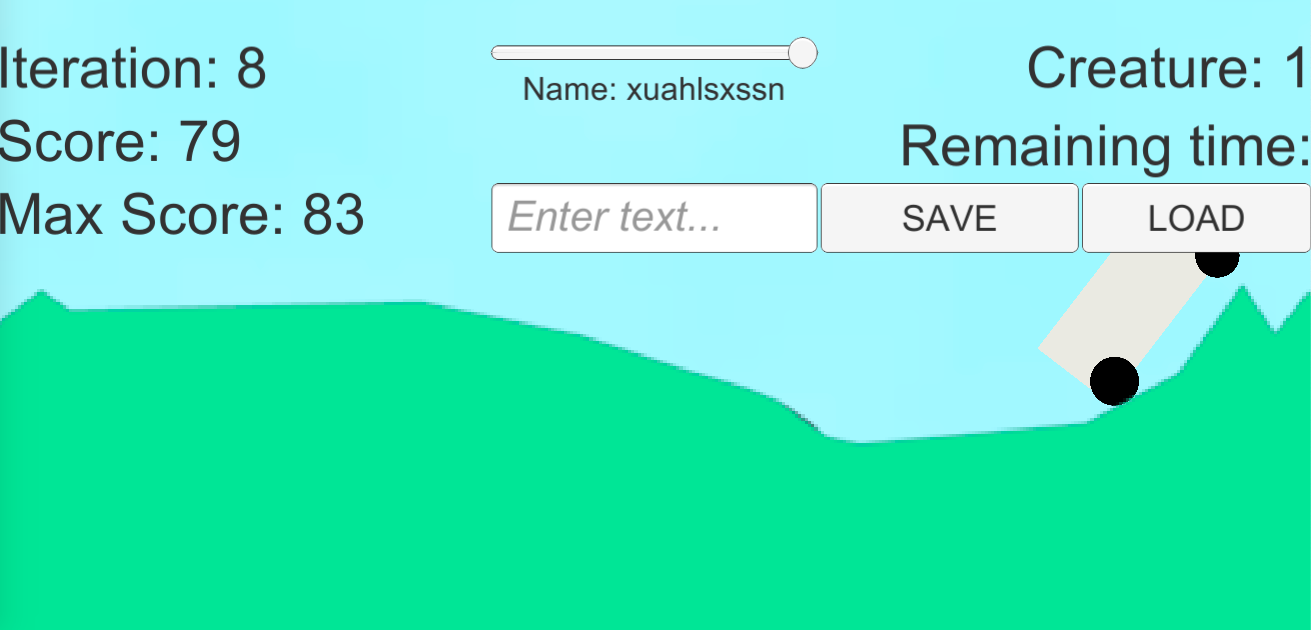

Evolution simulator

I created two evolution simulators (EVO and EVO 3.0) as a school project in late 2017. They are available at neverik/evo and neverik/evo3.0.

| First version |

| Third version |

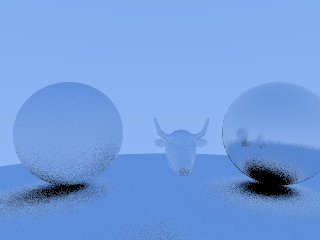

Raytracing

I made a path tracing-based renderer in the summer of 2019. Its source code is available on GitHub.

| A test render. |

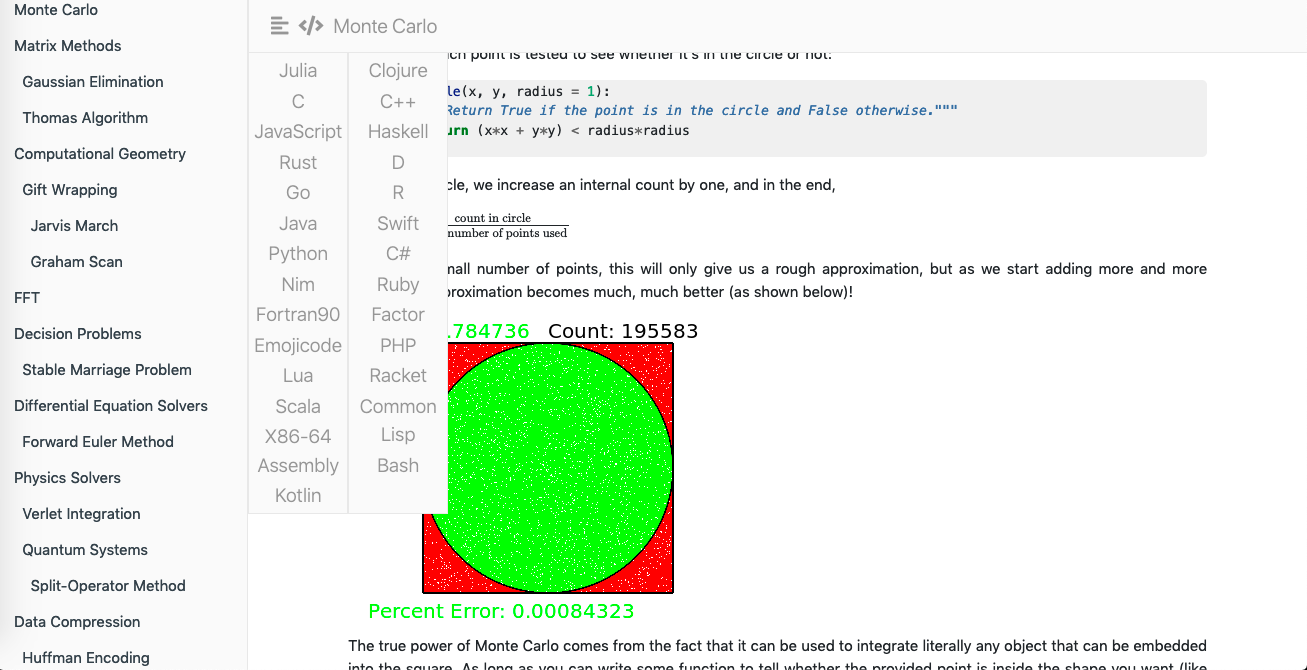

Arcane Algorithm Archive

I participated in the development of AAA, a book which aims to be an archive of important algorithms and their implementations in different languages. In particular, I have contributed Smalltalk implementations and a Python-based alternative to the official build system, which is based on an outdated version of GitBook. The original book can be accessed at algorithm-archive.org. My own version is on my GitHub.

Here’s a discussion about the build system.

| aaa-py |

Russian AI Cup

In November-December 2018, I participated in a competition named Russian AI Cup (RAIC). I made several solutions for it and contributed some packages to the Python Dockerfile.

| RAIC 2018: CodeBall |

Deep Learning

Some deep learning/artificial intelligence projects I made in summer 2018.

Autoencoding

Experiments with using a special type of neural network (autoencoders) to create procedural animations. I didn’t find any useful applications for the technology, but I found the results interesting. The source code can be found on my GitHub.

| Animation results |

Financial prediction

A simple LSTM-based financial prediction model. I’m not sure if it’s useful, but it’s there and achieves 80% accuracy on training data.

Evolutionary algorithms

Since EVO 1.0 and 2.0 failed, maybe neural network-based evolutionary simulators don’t even work? I tried making one, but it didn’t learn.

Note from future me: I actually didn’t implement a full genetic algorithm with crossover and multiple species because I thought it would be less useful for neural networks. I’ll need to conduct further experiments to see whether it’s possible to get improvements that way.
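For reference, here is a minimal sketch of what a genetic algorithm with crossover looks like. It is a toy example on bit strings, not the code from my simulators and not a neural network: the function name `evolve`, its parameters and the choice of truncation selection with one-point crossover are all assumptions made for illustration.

```python
import random


def evolve(fitness, genome_len, pop_size=30, generations=40,
           mutation_rate=0.02, seed=0):
    """Toy genetic algorithm over bit-string genomes (illustrative only)."""
    rng = random.Random(seed)  # seeded so that runs are reproducible
    pop = [[rng.randint(0, 1) for _ in range(genome_len)]
           for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        parents = pop[: pop_size // 2]   # truncation selection: keep the top half
        children = [pop[0][:]]           # elitism: the best genome survives as-is
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, genome_len)   # one-point crossover
            child = a[:cut] + b[cut:]
            # Flip each bit with a small probability (mutation).
            child = [1 - g if rng.random() < mutation_rate else g
                     for g in child]
            children.append(child)
        pop = children
    return max(pop, key=fitness)


# "OneMax" toy problem: fitness is the number of ones in the genome.
best = evolve(sum, genome_len=20)
print(sum(best), best)
```

Because the best genome is copied unchanged into every new generation, the best fitness can never decrease from one generation to the next.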

Voice conversion

I developed several methods for voice conversion (speech style transfer).

- Original method (Autumn 2019, didn’t work correctly)

- QuartzNet method (June 2020, didn’t generalize)

- Experiments in style transfer (June-July 2020)

I finished the project in a private repository using the time warping method implemented in /vconvo.

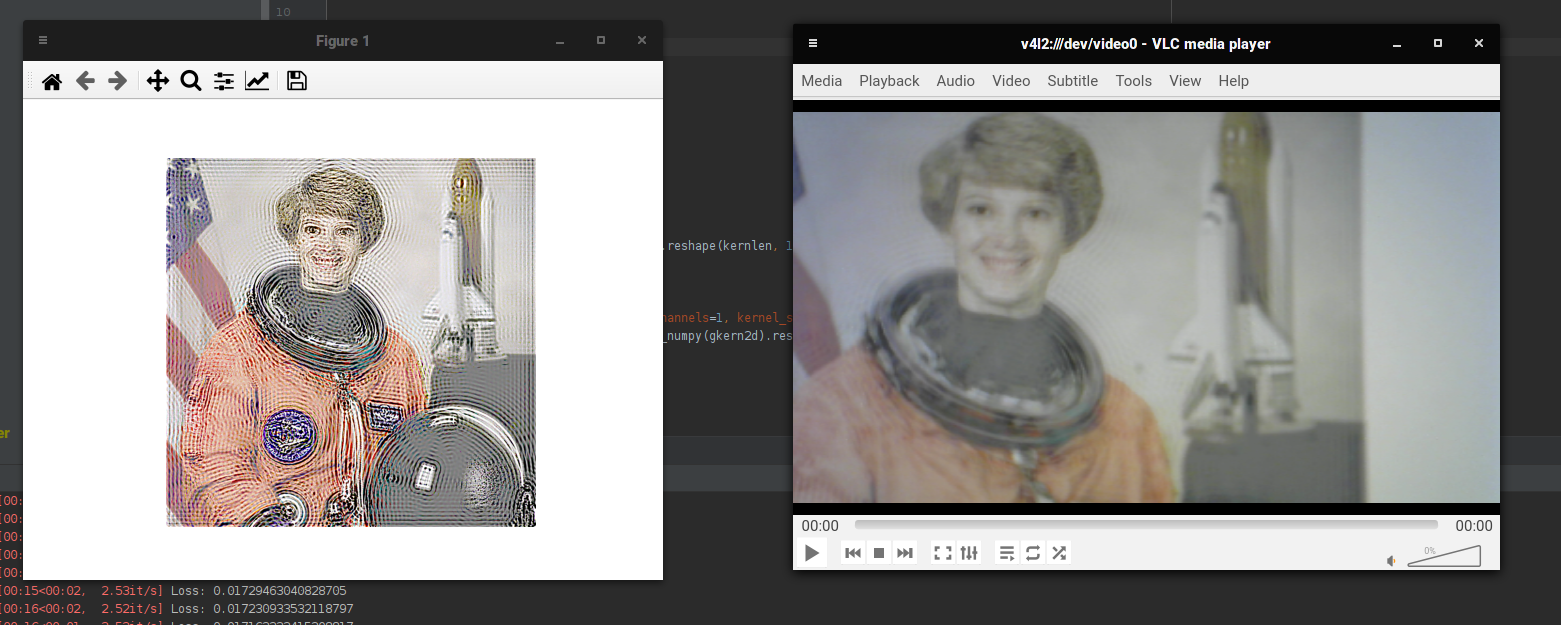

Undefocus

In October 2020, I was researching VR, and specifically VR optics. I was looking for a way to create a VR display without lenses and created an algorithm that could pre-process images to get rid of defocus blur computationally.

| Preprocessed image in focus; in defocus. |

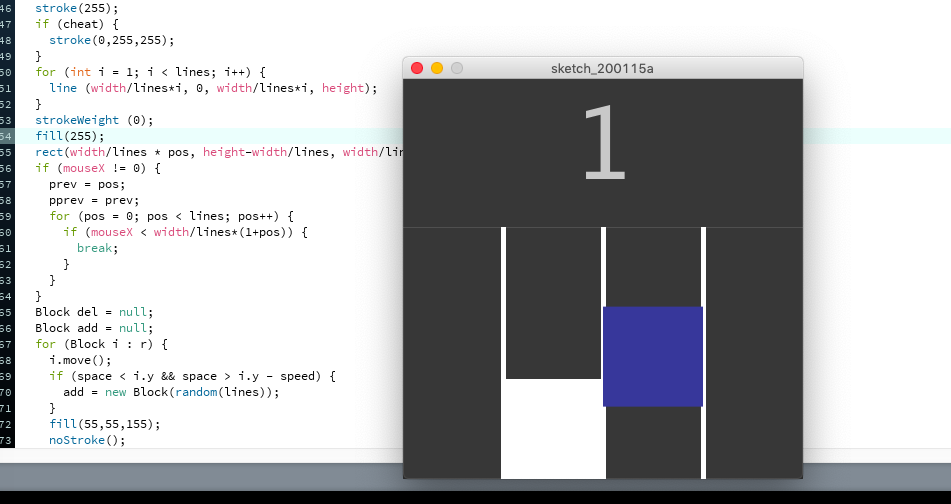

Processing and Android

Processing is a creative coding environment. I used it on my Android phone during the 2017-2018 school year to create several sketches, such as games, simulations and generative art. The source code is available at neverik/android-processing for Android sketches and neverik/processing for Processing ones.

| One of my Processing games |

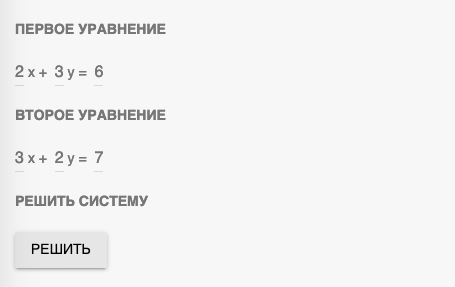

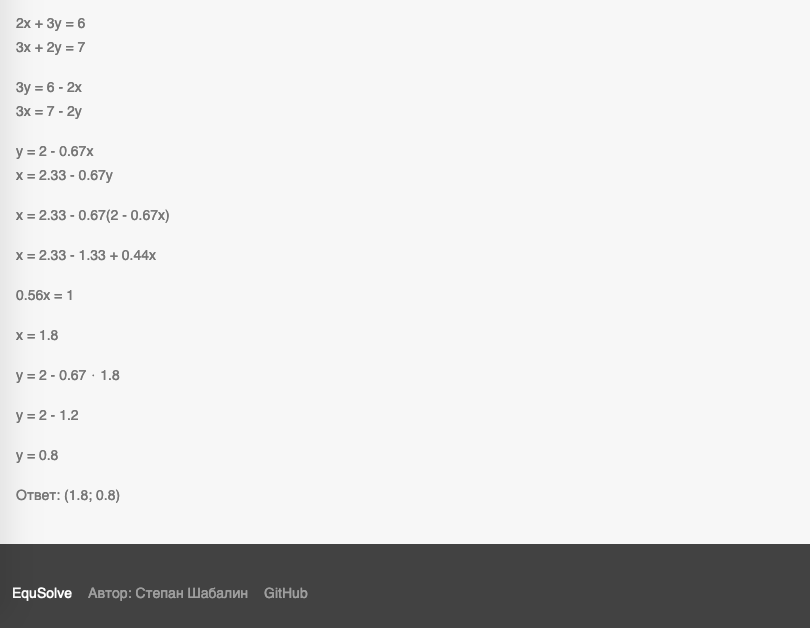

Equation solver

At the end of the 2018-2019 school year, students of the 7A class had to make a project in their speciality of interest and then present it to the class. Since we studied linear equations in our algebra class, I decided to make a linear equation solver as a project. The source code is available at neverix/equsolve and a live demo is on GitHub Pages.

| Parameters for the solver |

| A detailed solution |

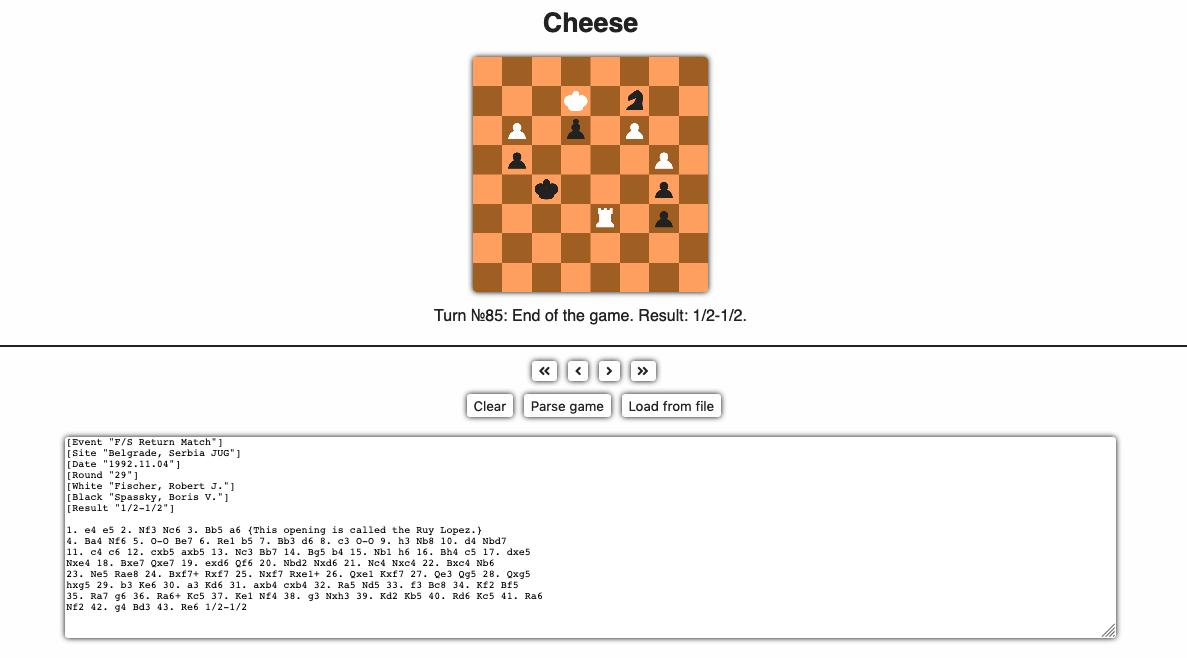
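To give an idea of what solving a linear equation involves, here is a minimal Python sketch. It is not the code from neverix/equsolve: the names `parse_side` and `solve_linear` are made up for this example, and it assumes a single unknown called `x` with plain numeric coefficients and no brackets.

```python
import re
from fractions import Fraction


def parse_side(side):
    """Return (a, b) such that the given side of the equation equals a*x + b."""
    a = b = Fraction(0)
    # Split into signed terms, e.g. "2x+3" -> ["2x", "+3"].
    for term in re.findall(r"[+-]?[^+-]+", side.replace(" ", "")):
        if term.endswith("x"):
            coef = term[:-1]
            if coef in ("", "+", "-"):   # a bare "x", "+x" or "-x"
                coef += "1"
            a += Fraction(coef)
        else:
            b += Fraction(term)
    return a, b


def solve_linear(equation):
    """Solve an equation such as '2x + 3 = 7 - x' for x."""
    left, right = equation.split("=")
    a1, b1 = parse_side(left)
    a2, b2 = parse_side(right)
    a, b = a1 - a2, b2 - b1   # move x terms left, constants right: a*x = b
    if a == 0:
        raise ValueError("no unique solution")
    return b / a


print(solve_linear("2x + 3 = 7 - x"))  # prints 4/3
```

Using `Fraction` instead of floats keeps answers like 4/3 exact, which is convenient when the result has to be shown as a step-by-step solution.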

Cheese

When I was researching chess AI at the end of 2019, I found several games in algebraic chess notation. I don’t play chess, so it was hard for me to read them. I decided to make a chess game visualizer and called it Cheese. The source code is at neverix/cheese and a live demo is on GitHub Pages.
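As an illustration of why the notation is hard to read at a glance, here is a small Python sketch that turns a single move in standard algebraic notation into plain English. It is not taken from Cheese: the `SAN` pattern and the `describe_move` function are assumptions made for this example, and the sketch does not track the board, so it cannot tell which exact piece is moving.

```python
import re

PIECES = {"K": "king", "Q": "queen", "R": "rook", "B": "bishop", "N": "knight"}

# One move: optional piece letter, optional disambiguation, optional capture,
# target square, optional promotion, optional check or checkmate marker.
SAN = re.compile(
    r"(?P<piece>[KQRBN])?"
    r"(?P<from_file>[a-h])?(?P<from_rank>[1-8])?"
    r"(?P<capture>x)?"
    r"(?P<target>[a-h][1-8])"
    r"(?:=(?P<promotion>[QRBN]))?"
    r"(?P<check>[+#])?"
)


def describe_move(san):
    """Describe one algebraic-notation move in words."""
    core = san.rstrip("+#")
    if core == "O-O":
        return "castles kingside"
    if core == "O-O-O":
        return "castles queenside"
    m = SAN.fullmatch(san)
    if m is None:
        raise ValueError(f"not a recognised move: {san!r}")
    piece = PIECES.get(m["piece"], "pawn")   # no piece letter means a pawn
    verb = "captures on" if m["capture"] else "moves to"
    text = f"{piece} {verb} {m['target']}"
    if m["promotion"]:
        text += f", promoting to {PIECES[m['promotion']]}"
    if m["check"] == "+":
        text += " (check)"
    elif m["check"] == "#":
        text += " (checkmate)"
    return text


for move in ["e4", "Nf3", "exd5", "O-O", "Qxf7#"]:
    print(move, "->", describe_move(move))
```

A full visualizer also has to replay the moves on a board to work out where each piece came from, which this sketch leaves out.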


VR Caterpillar Simulator

This is my first VR project. I never finished it, but at least it exists. It’s a “Caterpillar Simulator” VR game for mobile devices. I created it using the Unity game engine. It was made mostly in summer 2017. One of the lessons I learned was not to rely too much on pre-made assets for 3D models, because the game ended up with no consistent art style. The (unfinished) source code is available at neverik/vr.


The start of the game. The player can look down to walk (since this is a Cardboard game, all interaction is gaze-based) and has to eat… Mushrooms? So they don’t starve.


The boat minigame. The player has to steer a boat (actually, it was intended as a wooden chip, but for some reason I called it a boat in the scripts).


Near the end of the boat minigame, the player approaches a tree. Then the game makes them jump out of the boat onto the ground. After playtesting this part, I learned another lesson: never control the player’s movement in a VR game.


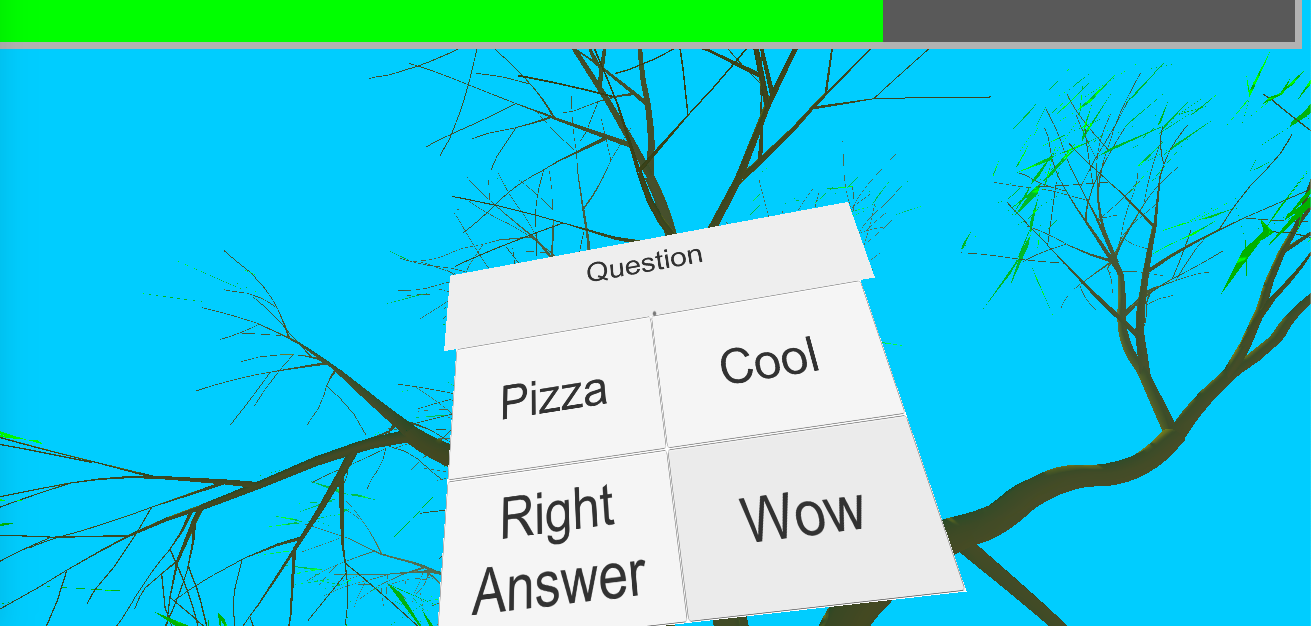

Now the player has to reach the tree, climb up and become a butterfly. Oh no! A spider approaches the player and blocks their way to the tree! Looks like its model didn’t load.


It starts asking questions. I didn’t make up the questions themselves, but they would probably be generic trivia.


After the player answers the spider’s questions, the spider jumps out into the nothingness and frees the path. Since the spider model didn’t load, it looks like the collider stopping the player still exists. If that didn’t happen, the player would climb up their tree (again, out of their control), turn into a butterfly and get chased by a bird - which is the last thing I made.

Game Jams

I have participated in many game jams over the years. Most of my jam games were made for Ludum Dare and Mini Jam.

This portfolio is a WIP!

I’m still adding entries.